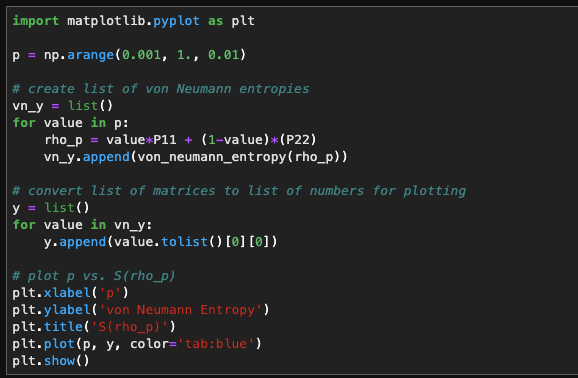

In thermodynamics, entropy is explained as a state of uncertainty or randomness. In statistics, we borrow this concept as it easily applies to calculating probabilities. When we calculate statistical entropy, we are quantifying the amount of information in an event, variable, or distribution. Understanding this measurement is useful in many machine learning tasks, such as building decision trees or choosing the best classifier model. We will discuss the applications of entropy later in this article, but first we will dig into the theory of entropy and how to calculate it using SciPy.

Calculating the Entropy

The method for calculating the information of a variable was developed by Claude Shannon, whose approach answers the question: how many "yes" or "no" questions would you expect to ask to get the correct answer?

Consider flipping a coin.
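To make the coin-flip case concrete, here is a minimal sketch of Shannon entropy for a discrete distribution, measured in bits (base 2). The helper name `shannon_entropy` and the 90/10 biased coin are illustrative assumptions, not something taken from the article. The optional cross-check uses `scipy.stats.entropy`, which is one way SciPy exposes this calculation, and is skipped if SciPy is not installed.

```python
import math


def shannon_entropy(probs, base=2):
    """Shannon entropy of a discrete distribution (bits when base=2).

    Hypothetical helper for illustration; assumes `probs` sums to 1.
    """
    # Outcomes with zero probability contribute nothing, so they are skipped.
    return sum(-p * math.log(p, base) for p in probs if p > 0)


fair_coin = [0.5, 0.5]    # heads and tails equally likely
biased_coin = [0.9, 0.1]  # assumed example: heads 90% of the time

print(f"fair coin:   {shannon_entropy(fair_coin):.3f} bits")
print(f"biased coin: {shannon_entropy(biased_coin):.3f} bits")

# Optional cross-check against SciPy, if it is available.
try:
    from scipy.stats import entropy

    print(f"SciPy, fair coin: {entropy(fair_coin, base=2):.3f} bits")
except ImportError:
    pass
```

A fair coin comes out at exactly 1 bit, which matches the intuition of needing one yes-or-no question to learn the outcome. The biased coin carries less information (roughly 0.47 bits) because its result is easier to guess in advance.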
